TL;DR

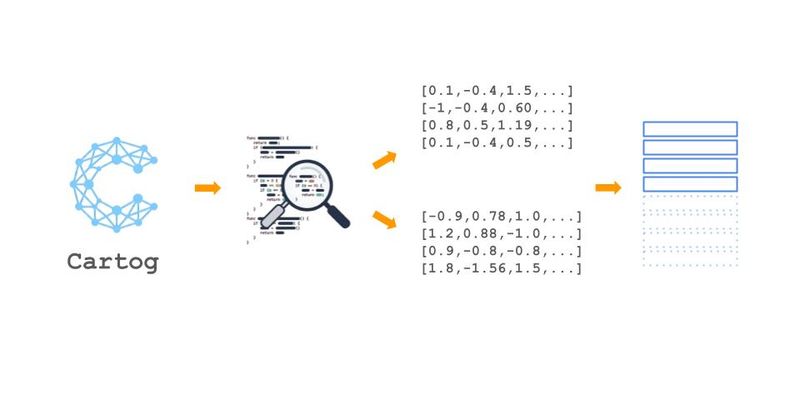

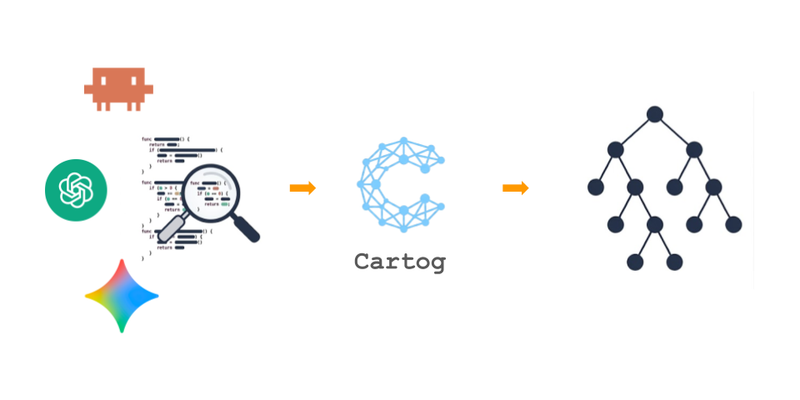

The code graph requires knowing the symbol name. To go further, cartog turns each symbol into an embedding with BGE-small (ONNX, CPU inference), combines FTS5 (BM25) with vector KNN via sqlite-vec, fuses results with RRF, then re-ranks with a cross-encoder. Everything runs locally by default, and config lets you swap the embedding provider or storage backend if needed. To try it out: documentation · source code.

The structural graph answers fast and accurately, as long as you know the symbol name.

What happens when the agent describes intent instead of an identifier?

As a reminder, cartog parses each file with Tree-sitter, extracts symbols (functions, classes, methods) and their relations (calls, imports, inheritance) from the AST, then stores everything in a SQLite graph.

This graph enables structural navigation: cartog refs UserService or cartog impact verify_token, provided you know the exact symbol name.

That is precisely the limit: an AI agent receiving “find the order validation logic” has no way to guess the function is called validate_order_items.

Neither grep nor an exact graph lookup will find that symbol from a natural-language description.

But how can a machine “understand the meaning” of a piece of code? Code isn’t made of words or sentences.

We need to search by meaning, not by text. That’s what semantic search enables. This article shows how cartog implements it, locally by default, without sending a single character to the cloud (nb: config leaves the door open to a remote provider if you want one).

How do we turn a function and an English question into something comparable, when one is code and the other is natural language?

The answer fits in one word: embedding. An AI model, trained on billions of text/code pairs, can project any sequence into a vector space with several hundred dimensions. Two texts with similar meaning land at nearby positions, even when they share no words. It’s what Google does when you search “how to fix a leaky sink” and it returns an article titled “residential plumbing repair”.

Cartog applies this idea to symbols: each function, class, and method in the graph is turned into a vector of 384 numbers. The natural-language query goes through the same model, and the closest symbols in vector space are returned.

flowchart LR

Q["'order validation'"] --> EQ["Embedding<br>query → vec 384d"]

S1["validate_order_items()"] --> ES1["Embedding<br>symbol → vec 384d"]

S2["process_payment()"] --> ES2["Embedding<br>symbol → vec 384d"]

EQ --> KNN["KNN<br>L2 distance"]

ES1 --> KNN

ES2 --> KNN

KNN --> R["validate_order_items ✓<br>(distance: 0.12)"]In theory, then, we take each function, send it to the model, store the vector.

Is that enough?

No. A naive embedding of raw source code tends to give mediocre results. Code contains noise (blank lines, formatting comments, closing braces) that dilutes the semantic signal.

Truncating a 200-line function to fit the model’s window throws away exactly the parts that carry intent.

If you send a 200-line function to the model, what does it actually keep? What about braces, blank lines, license headers, all that noise with no business meaning?

Rather than embedding raw code, cartog uses the AST structure to prepare an optimized text per symbol:

flowchart TD

SYM["Extracted symbol<br>validate_order_items()"] --> H["Header<br>signature + decorators"]

SYM --> B["Body<br>significant lines"]

H --> CHUNK["Embedding chunk<br>≤ 500 bytes"]

B --> CHUNK

CHUNK --> EMB["BGE-small embedding<br>384 dimensions"]

Concretely, the embedding text is built like this:

- Header: a normalized comment that includes the path, the kind, and the signature, e.g.

// File: auth/tokens.py | function validate_token.

Decorators (@login_required,#[derive(Debug)]) are kept: they carry semantic meaning. - Significant body: code lines, filtering out blank lines, purely formal comments, and isolated closing braces.

- Limits: ~800 bytes (~200 tokens) for the bi-encoder input, enough to capture “what this function does” while staying within the model’s 512-token window. 2048 bytes (~512 tokens) for the full content stored in the database, used by FTS5 and the re-ranker.

What is not embedded:

- Imports: they already exist as edges (import relations) in the graph.

- Simple variables: too many, low semantic signal.

Markdown documents follow a specific pipeline: they’re chunked by heading (each section becomes a symbol), with a paragraph-level sub-chunking for large sections (~1,500 bytes). Files without headings use fixed-size chunking.

OK, but who computes those embeddings? Do you need a GPU? An OpenAI API?

Neither, by default. Cartog uses BGE-small-en-v1.5, a small embedding model (~80 MB after quantization) downloaded once and run on CPU via ONNX Runtime. Exact parameters (dimensions, normalization, serialization) are in the cartog docs. No network call to compute a vector, no data leaving the machine.

That choice is deliberate. Cartog is a developer tool that runs on the developer’s machine: sending source code to an external service is not acceptable for many teams (NDAs, proprietary code, compliance). The trade-off: CPU inference is slower than a hosted API, but a few optimizations make up most of the gap (length-based batching, see “Gotchas”).

And what if you actually want a hosted API, or to store the graph somewhere other than a local SQLite?

The pipeline is configurable: the embedding provider (local ONNX model, remote API) and the storage backend (local SQLite, shared database) can be swapped without touching the rest of the chain. The local-first default protects proprietary code; the override unlocks team setups (shared graph in CI, hosted embedding for GPU inference).

If embeddings capture meaning, why keep keyword search (FTS5) in the loop?

Because semantic search alone misses exact matches. When the agent searches for validate_order_items (an exact name it saw in a trace or an error message), a plain text match is faster and more reliable than a vector distance computation. Conversely, when it searches for “the order validation logic”, text matching returns nothing.

Execution cost also favors keyword as the first step: keyword search over symbol names answers in sub-millisecond, while semantic search (query embedding + KNN) lands around 300 ms. If we can skip the semantic call, we do.

The two approaches complement each other. Cartog runs them in parallel and fuses the results:

flowchart TD

Q["User query"] --> FTS["FTS5 keyword<br>BM25 ranking"]

Q --> VEC["Vector KNN<br>sqlite-vec"]

FTS --> RRF["Reciprocal Rank Fusion<br>k=60"]

VEC --> RRF

RRF --> TOP["Top candidates"]

TOP --> RE["Cross-encoder<br>re-ranking"]

RE --> RES["Final results"]What do FTS5 and BM25 change compared to a plain SQL

LIKE '%validate%'?

SQLite FTS5 is the full-text index built into SQLite. It ranks results with BM25, an algorithm that weights terms by their rarity in the corpus: a common word like “validate” carries less weight than a rare one like “ledger”.

Cartog queries FTS5 with a cascading fallback to handle queries of different lengths:

| Level | FTS5 query | When |

|---|---|---|

| 1 | "validate order items" (exact phrase) | Adjacent match |

| 2 | validate AND order AND items | All terms present |

| 3 | validate OR order OR items | At least one term |

The first level that returns results is kept. Search runs on the symbol name, on the normalized name (camelCase → separated words, so validateOrderItems also matches “validate order items”), and on the full content.

KNN, L2 distance, cosine: how does SQLite actually find the closest vectors to a query out of thousands?

The user query is embedded with the same BGE-small model, then compared against the stored symbol vectors in sqlite-vec, a SQLite extension that adds native vector-search operators. Because vectors are normalized on output from the model, L2 distance produces the same ranking as cosine similarity, while being faster to compute.

We have two lists ranked differently. How do we combine them without falling into arbitrary weight tuning?

Reciprocal Rank Fusion (Cormack et al., 2009) answers with a remarkably simple formula:

score(doc) = 1/(k + rank_keyword + 1) + 1/(k + rank_vector + 1) with k = 60rank is the document’s position in each ranked list (0-indexed). The final score is the sum over both lists. The trick: RRF completely ignores the value of the original scores (BM25 vs L2 distance, two incompatible scales) and looks only at position. A #1 document in a list always contributes 1/(60+1), whether its BM25 score is 12 or 0.3.

Practical consequences:

- A document well-ranked in both lists is promoted (signal reinforced).

- A document ranked #1 by keyword but absent from semantic still stays in the top.

- No weights to tune between keyword and semantic: the fusion is parameter-free.

Why a third stage? Hasn’t RRF already ranked what comes out of the first two?

RRF ranks on positions, not absolute relevance. For the top candidates, we can afford a more expensive but more accurate computation: the cross-encoder. Where the bi-encoder compares two vectors pre-computed independently, the cross-encoder takes the query and the document together and produces a relevance score by cross-attending their tokens. More accurate, but slower, hence the cascade filter: only the top 50 RRF candidates (configurable) are sent to the cross-encoder.

Cartog uses a BGE-reranker model (~1.1 GB on disk, larger than the embedding model but only used for the top of the list).

The re-ranker is optional: if it fails to load (model missing, ONNX error), search returns the RRF results without re-ranking. A 3-state cache avoids retrying a failed load:

stateDiagram-v2

[*] --> NotTried

NotTried --> Ready : load OK

NotTried --> Failed : ONNX error

Ready --> Ready : queries

Failed --> Failed : do not retryOn paper, the pipeline is clean. In practice, three details broke search before they were fixed.

False positives on imports: imports contain the names of imported symbols. Without filtering, from auth import validate_token got embedded and matched queries on validate_token, duplicating the real symbol. That’s what motivated excluding imports from the embedding (see “AST-aware chunking”).

Length-based batching: ONNX Runtime pads sequences to the batch’s longest sequence. Mixing 10-token symbols with 200-token symbols wastes compute (BERT attention is O(n²) in length, so padding gets expensive). Sorting by length before batching reduces padding and speeds up inference.

UTF-8 safety: source code sometimes contains multi-byte characters (Chinese, Japanese, Korean comments, emoji in strings). Truncating to ~800 bytes can cut mid-character. Cartog uses a byte-safe truncation that backs up to the nearest character boundary.

At this point, cartog combines two building blocks:

- A structural graph (article 1): symbols and edges extracted by tree-sitter, queryable by exact name (

refs,callees,impact). - A hybrid semantic search (this article): FTS5 for names and exact matches, vector KNN for meaning, RRF to fuse them, cross-encoder to re-rank the top.

The agent can now find a function it knows by name or by intent, locally, in a few hundred milliseconds. Detailed technical parameters in the cartog docs.

But graph quality still depends on one thing we haven’t fixed: edge precision. The article 1 heuristic resolves 25-37% of calls. When the agent asks “which functions call

validate_order_items?”, it misses two thirds of the callers. How do you get to 80% without reimplementing a compiler?

That will be the topic of the next article: wiring cartog into language servers (LSP) to get IDE-level precision.